Bounded Rationality: the Case of ‘Fast and Frugal’ Heuristics

Rethinking Economics, 2020

Pluralist Showcase

In the pluralist showcase series by Rethinking Economics, Cahal Moran explores non-mainstream ideas in economics and how they are useful for explaining, understanding and predicting things in economics.


By Cahal Moran

How do people make decisions? There is a class of models in psychology which seek to answer this question but have received scant attention in economics despite some clear empirical successes. In a previous post I discussed one of these, Decision by Sampling, and this post will look at another: the so-called Fast and Frugal heuristics pioneered by the German psychologist Gerd Gigerenzer. Here the individual seeks out just enough information to make a reasonable decision. The heuristics are ‘fast’ because they do not require massive computational effort and so can be applied in seconds, and they are ‘frugal’ because they use as little information as possible to make the decision effectively.

A non-economic but illuminating example is the gaze heuristic, used by both dogs and people when catching a flying object such as a ball. In order to know the exact trajectory of the ball one would need to solve prohibitively difficult differential equations, so both we and our canine pals instead look at the ball while tilting our heads at a particular angle (it doesn’t matter which, within reason) and run, adjusting our speed so that this angle stays constant. This means we reach the ball as it lands, and the method is pretty successful, though it makes us run in an arc. It also leads to some slightly odd behaviours, such as backing away then stepping forward when the ball is thrown straight upwards (yes, you just realised you do that).
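To see why holding the angle constant is enough, here is a minimal sketch in Python. It is a simplification under stated assumptions rather than a model of real fielders: motion is in one dimension (so there is no arc), there is no air resistance, the fielder starts beyond the ball and fixes their gaze once the ball is already descending, and every number and name (`simulate_gaze`, the speeds, the starting positions) is hypothetical.

```python
def simulate_gaze(v_x=12.0, v_y=20.0, g=9.81, t_start=2.5,
                  fielder_start=60.0, dt=0.01):
    """Return (fielder's final position, ball's landing position) in metres.

    All defaults are illustrative assumptions, not measured values.
    """
    def ball(t):
        # Simple projectile motion: horizontal position and height at time t.
        return v_x * t, v_y * t - 0.5 * g * t * t

    # The fielder fixes a gaze angle at t_start. Only its tangent is needed:
    # height of the ball divided by horizontal distance to the ball.
    x0, h0 = ball(t_start)
    tan_gaze = h0 / (fielder_start - x0)  # assumes the fielder is beyond the ball

    t_land = 2 * v_y / g  # time at which the ball returns to the ground
    t, fielder = t_start, fielder_start
    while t < t_land:
        t = min(t + dt, t_land)
        x, h = ball(t)
        # Stand wherever keeps height / distance equal to the fixed tangent.
        fielder = x + max(h, 0.0) / tan_gaze
    return fielder, v_x * t_land


final_x, landing_x = simulate_gaze()
print(final_x, landing_x)
```

The fielder never computes where the ball will land. Because the required distance to the ball shrinks in proportion to its height, keeping the angle fixed brings the fielder to the landing point just as the height reaches zero.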

Some heuristics are extremely simple. For instance, the recognition heuristic means people assume that the city they recognise out of a pair has the larger population: most people choose San Diego over San Antonio for this reason. One surprising finding is that in many cases, these heuristics outperform more informed judgments. For instance, US students, who were more likely to know both cities, had a lower success rate than Germans, who accurately predicted San Diego simply because they had heard of it. This is a confirmation of the phrase ‘a little knowledge is a dangerous thing’: while knowing something outright is obviously the best place to start, if you don’t you might find that your fast and frugal heuristics are more reliable. Another example which can work this way is the majority rule; I’ll leave the reader to guess what this means.
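A small counting exercise shows how recognising fewer cities can produce more correct answers. The sketch below assumes a quiz over every pair drawn from `N` cities, of which a person recognises `n`. If they recognise exactly one city in a pair they pick it and are right with probability `alpha`; if they recognise both they rely on what they know and are right with probability `beta`; if they recognise neither they guess. The function name and the values of `alpha` and `beta` are illustrative assumptions, not figures from the experiments described above.

```python
def expected_accuracy(n, N, alpha, beta):
    """Expected share of correct answers over all pairs of N cities.

    n     -- number of cities the person recognises
    alpha -- accuracy when exactly one city is recognised (recognition heuristic)
    beta  -- accuracy when both cities are recognised (other knowledge)
    """
    total_pairs = N * (N - 1) / 2
    one_recognised = n * (N - n)               # recognition heuristic applies
    none_recognised = (N - n) * (N - n - 1) / 2  # pure guess, 50% correct
    both_recognised = n * (n - 1) / 2          # must fall back on knowledge
    correct = (one_recognised * alpha
               + none_recognised * 0.5
               + both_recognised * beta)
    return correct / total_pairs


# Hypothetical values: recognition is a better guide (0.8) than knowledge (0.6).
half = expected_accuracy(50, 100, alpha=0.8, beta=0.6)
everything = expected_accuracy(100, 100, alpha=0.8, beta=0.6)
print(round(half, 3), round(everything, 3))
```

With these assumed numbers, someone who recognises half the cities scores about 0.68, while someone who recognises all of them scores 0.60, because the second person can never use the recognition heuristic. The effect only appears when `alpha` exceeds `beta`.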

Gigerenzer disputes that these cognitive shortcuts should always be called ‘biases’, since this supposes that there is a ‘best’ model from which they are deviating. To go back to the gaze heuristic, comparing people running in an arc to the ball to the ideal of solving differential equations might result in calling the former the ‘arc bias’ rather than understanding their behaviour. If you ‘corrected’ their behaviour with a ‘nudge’ to make them run in a straight line, you’d just mess everything up. Ultimately people making decisions may have different goals and frameworks to researchers, and assuming you know what they should do can cause you to misunderstand them.

When Fast and Frugal is Better

More complex decisions such as those in economics require what Gigerenzer calls a ‘stop and search’ procedure: look for the most important information but have a rule for when you will stop looking and utilise the information you have to make a decision. He gives an example of an experiment where people chose between investing in the shares of two different firms. Participants had information on a series of yes-no questions about the firms such as “Does the company invest in new projects?” and “Does the company have financial reserves?” and sorted through the answers the firms give to make a judgment.

There are different heuristics one can use within stop and search. If there is prior information about how useful each signal is (e.g. from past investments), then simply search through the questions in order of how useful each has proven and stop at the first one on which the firms differ, choosing the firm that answers ‘yes’. So if a company’s financial reserves were the best predictor of its subsequent performance, and firm A had reserves while firm B did not, one would pick firm A. This is known as Take The Best (TTB). Alternatively, without prior information one can randomly select a certain number of questions and simply add up the number of yeses for each firm. This is known as Tallying.
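The two rules can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in the experiments: it reads TTB as stopping at the first question where one firm answers yes and the other no, as in the reserves example, and the function names and the answers for the two firms are hypothetical.

```python
from typing import Optional, Sequence


def take_the_best(a: Sequence[bool], b: Sequence[bool]) -> Optional[str]:
    """Pick a firm using the first question on which the two firms differ.

    Answers are assumed to be ordered from the most to the least useful
    question, based on prior information.
    """
    for answer_a, answer_b in zip(a, b):
        if answer_a != answer_b:
            return "A" if answer_a else "B"  # stop searching here
    return None  # no question separates the firms, so one would have to guess


def tally(a: Sequence[bool], b: Sequence[bool]) -> Optional[str]:
    """Pick the firm with more 'yes' answers among the questions supplied."""
    yes_a, yes_b = sum(a), sum(b)
    if yes_a == yes_b:
        return None  # a tie, so one would have to guess
    return "A" if yes_a > yes_b else "B"


# Hypothetical answers; the first question (say, financial reserves) is
# assumed to be the best predictor.
firm_a = [True, False, False]
firm_b = [False, True, True]
print(take_the_best(firm_a, firm_b), tally(firm_a, firm_b))
```

Here TTB picks firm A on the strength of the first question alone, while Tallying picks firm B because it has two yeses to A’s one. The rules can disagree, which is why it matters which one people actually use.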

However, a critical word is in order. Gigerenzer draws from experiments to show that people generally use a ‘stop and search’ framework as outlined above, but what he doesn’t say is that some of the experiments he cites show specifically that TTB is not the relevant heuristic. Thus, while participants seem to search through information and stop before they have processed all of it, it’s not always clear how they do this. Certainly these subjects are using heuristics, but more research needs to be done before we can predict which ones they are using. As the authors of the experiment note, without this information the exercise risks becoming unfalsifiable because almost any process can be deemed ‘fast and frugal’ after the fact.

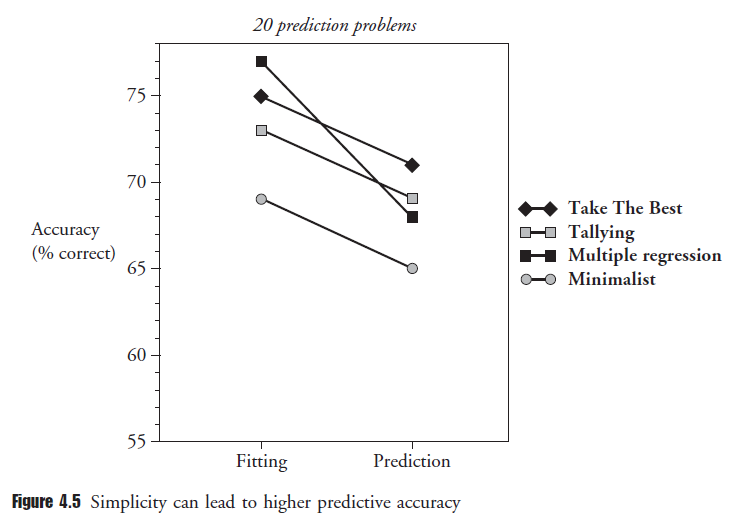

One of the most persuasive examples is an experiment which tested the predictive power of the TTB and Tallying versus the more computationally complex method of multiple linear regression. Across a wide range of examples including the selling price of particular houses, the mortality rate in US cities, and predicting high school dropout rates subjects were given prior information (eg for the last example they could see the % of low-income students, non-white students, SAT scores etc.). Astoundingly, while regression seems to fit the data better at first, once you make a prediction on a new set of data it tanks and the fast and frugal heuristics do better:

In Gigerenzer’s terminology, the heuristics have worse fit but they are more robust to changing environments, something that matters in reality. Thus, any social scientist interested in prediction should favour fast and frugal heuristics. This also has policy implications: as the authors put it, “a user of TTB would recommend getting students to attend class…but a regression user would recommend helping minorities”. Results show that the TTB user is more likely to be right even though the regression model fits better. (Note also the poor performance of ‘minimalist’, which just randomly searches until it finds ‘yes’ – this is an example of using too little thinking and information.)

Gigerenzer uses these results to chide economists and social scientists for their obsession with complex approaches. He summarises examples from medicine where doctors’ decisions using these heuristics have proven superior to logistic regression for detecting certain conditions without hitting the hospital’s resource constraints. In another paper he and Daniel Goldstein show that simple rules can lead to forecasts which are as good as or even better than those of experts and sophisticated models. I am not qualified to comment on the efficacy of these approaches in medicine, but this stands in stark contrast to mainstream economics, which has responded to empirical failures by making models ever more complex rather than changing the fundamentals. More generally, it diverges from a culture which holds that getting more information and using ever more complex methods to process it will allow us to make better decisions.
